How to Detect Drift Early in RAG Systems

RAG systems do not fail abruptly — they exhibit progressive drift. The challenge is not recognizing that drift exists, but identifying early-stage deviations in system behavior before they surface in user-visible outputs. In production, these deviations rarely manifest as explicit failures. Instead, they appear as gradual shifts in retrieval relevance, context utilization, and response grounding, while standard system metrics remain stable.

Traditional monitoring signals — latency, throughput, error rates — are insufficient for detecting this class of degradation. RAG pipelines can maintain stable infrastructure performance while experiencing semantic performance decay. The system continues to produce fluent responses, but the alignment between query, retrieved context, and generated output weakens over time. Detecting drift therefore requires instrumenting the system at the retrieval and generation layers, not just the infrastructure layer.

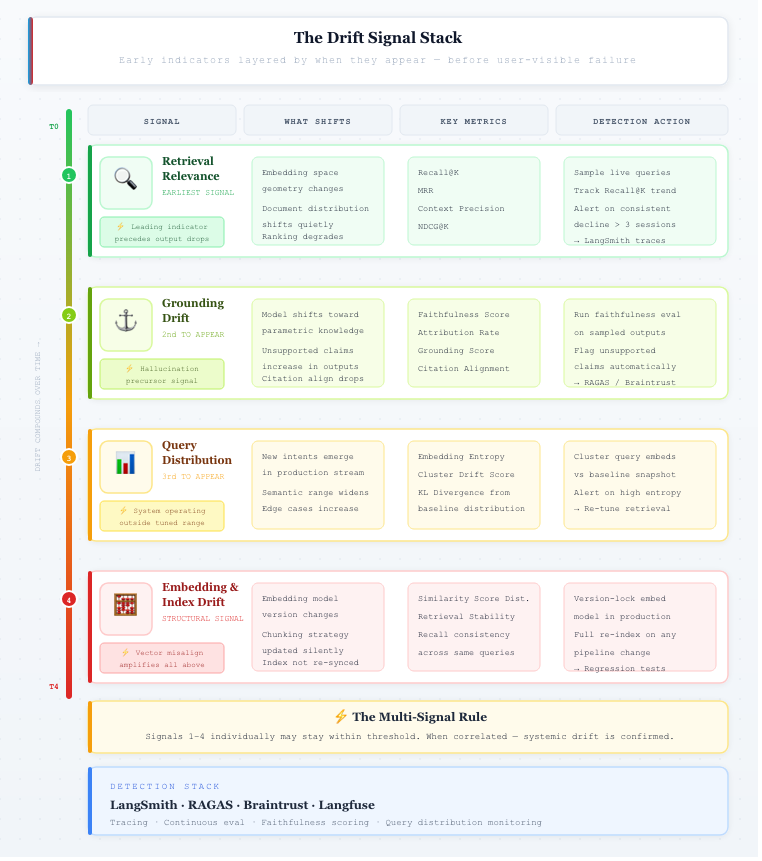

One of the earliest indicators is retrieval relevance degradation. This can be measured using ranking and recall-oriented metrics such as Recall@K, Mean Reciprocal Rank (MRR), and context precision. A consistent decline in these metrics — even within a narrow range — often signals upstream changes in embedding space, document distribution, or query composition. Importantly, this degradation typically precedes observable drops in answer correctness, making it a leading indicator rather than a lagging one.

A second critical signal is grounding drift, defined as the decreasing dependence of generated outputs on retrieved context. This can be quantified through faithfulness or attribution metrics, which evaluate whether statements in the response are supported by source documents. An increase in unsupported claims or a drop in citation alignment suggests that the model is relying more on parametric knowledge than retrieved evidence — a common precursor to hallucination.

Another important dimension is query distribution shift. Production query streams are non-stationary: over time, they introduce new intents, broader semantic ranges, and edge-case formulations. This can be analyzed using embedding clustering, entropy measures, or distance from baseline query distributions. A measurable shift indicates that the system is operating outside the conditions under which it was originally tuned, increasing the likelihood of retrieval and generation mismatch.

Drift can also originate from embedding and index inconsistency. Changes in embedding models, chunking strategies, or ingestion pipelines alter the geometry of the vector space. Without coordinated re-indexing, this leads to vector misalignment, where similarity scores no longer reflect true semantic proximity. This manifests as unstable retrieval rankings and reduced recall, even when underlying data quality has not changed.

A key characteristic of these signals is that they are low-amplitude and high-frequency. Individually, they may fall within acceptable thresholds. However, when correlated — for example, simultaneous drops in Recall@K, grounding scores, and query distribution alignment — they indicate systemic drift. Effective detection therefore requires multi-signal monitoring, rather than reliance on a single metric.

Operationally, early drift detection depends on integrating continuous evaluation with production observability. Systems built with frameworks like LangChain and instrumented via tools such as LangSmith enable tracing of retrieval, prompt construction, and generation steps. By continuously sampling live queries, logging intermediate artifacts, and tracking metric trends over time, teams can detect deviations before they propagate to user-visible failures.

The importance of tracing becomes clear when observing real system behavior. Using tools like LangSmith, it is possible to inspect how queries are processed end-to-end — from retrieved documents to final responses. Even in cases where the answer appears correct, traces often reveal subtle issues such as partially relevant retrieval or weak grounding. These are early indicators of drift that would not be visible from outputs alone.

Drift Signal Stack:

Signal 1 — Retrieval Relevance (green) — earliest leading indicator, precedes output drops

Signal 2 — Grounding Drift (lime) — hallucination precursor, model shifts to parametric knowledge

Signal 3 — Query Distribution (amber) — system operating outside its tuned range

Signal 4 — Embedding & Index (red) — structural signal that amplifies all the above

Drift is not an anomaly — it is an inherent property of systems operating on evolving data and probabilistic models. The objective is not to eliminate drift, but to detect it at low amplitude, before it compounds. Systems that incorporate early detection mechanisms can respond with targeted recalibration, while those that rely on late-stage signals are forced into reactive fixes.

References

Vector Drift in Azure AI Search: Three Hidden Reasons Your RAG Accuracy Degrades After Deployment - https://techcommunity.microsoft.com/blog/azure-ai-foundry-blog/vector-drift-in-azure-ai-search-three-hidden-reasons-your-rag-accuracy-degrades-/4493031

Production RAG in 2025: Evaluation Suites, CI/CD Quality Gates, and Observability You Can’t Ship Without - https://dextralabs.com/blog/production-rag-in-2025-evaluation-cicd-observability

Vector Drift in Azure AI Search: Three Hidden Reasons Your RAG Accuracy Degrades After Deployment - https://techcommunity.microsoft.com/blog/azure-ai-foundry-blog/vector-drift-in-azure-ai-search-three-hidden-reasons-your-rag-accuracy-degrades-/4493031