From RAG to Agentic RAG: Where ADK Fits (and Where It Doesn’t)

Retrieval-Augmented Generation (RAG) has quickly become a foundational pattern for building AI systems that ground responses in real data. And it works—up to a point. But anyone who has spent time building a RAG system knows where it starts to break down: vague user queries, incomplete retrieval, and no mechanism to recover when the first answer isn’t good enough. The limitation isn’t just the model or the data. It’s the lack of iteration.

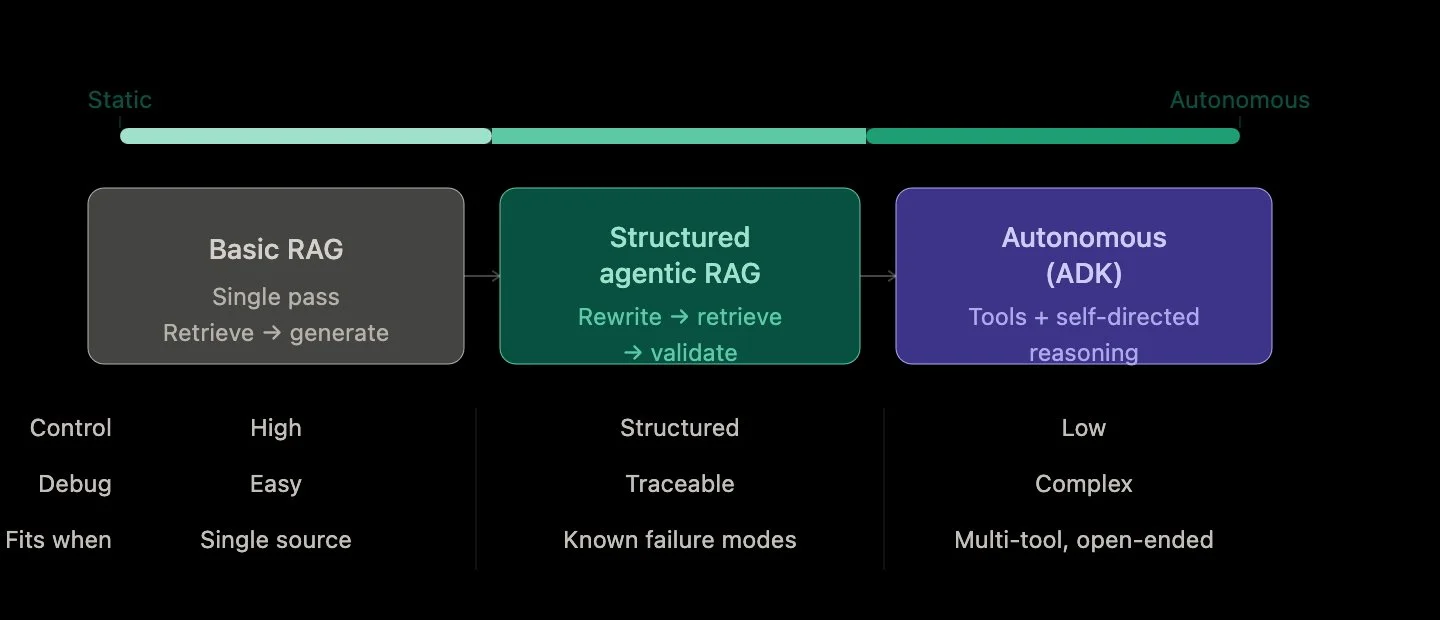

That’s where Agentic RAG comes in. Instead of a single-pass pipeline (retrieve, then generate), you introduce decision-making into the loop. The system can rewrite a query, retrieve again, validate an answer, or even decide that it needs more context before responding. The shift is subtle but important: from a static pipeline to a system that can reason about its own process.

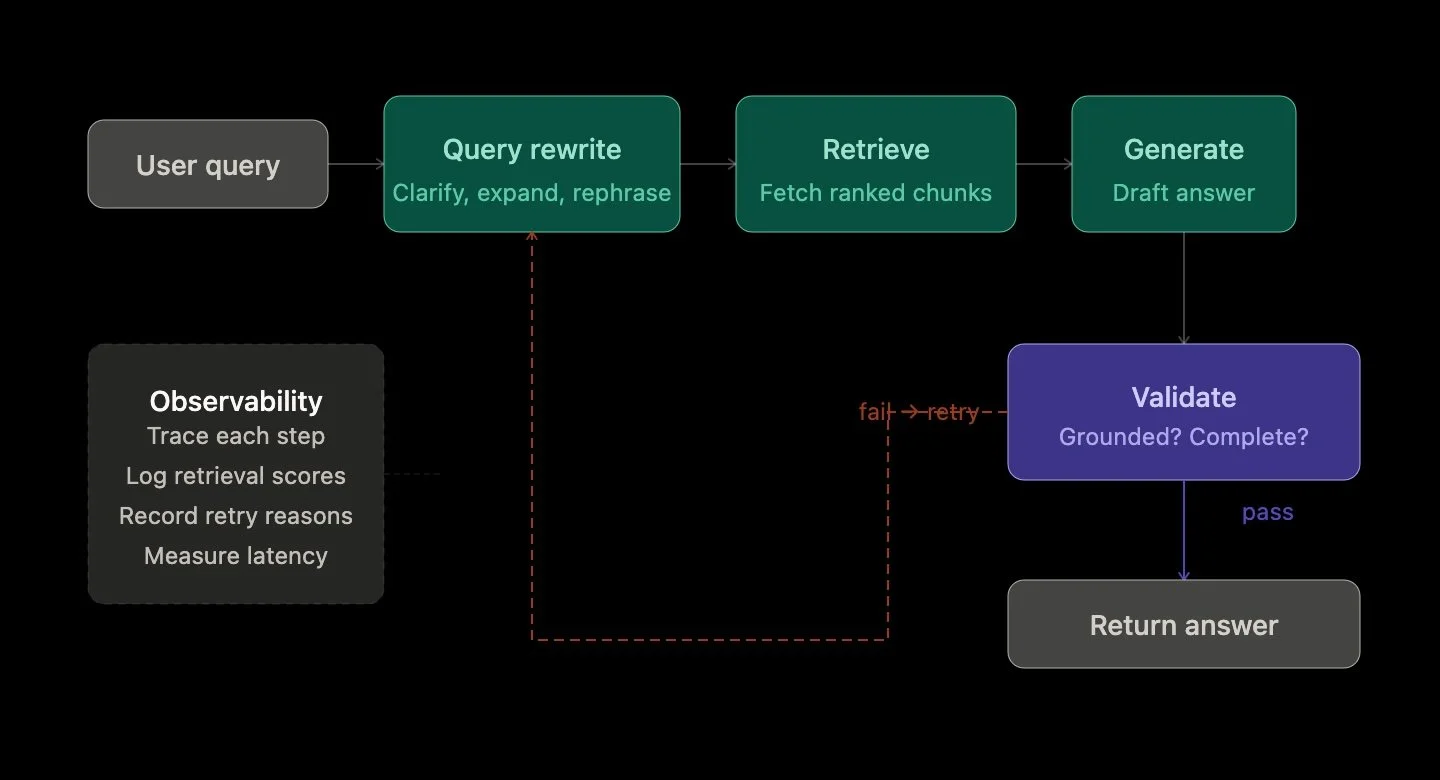

There are two main ways to approach this evolution. The first is structured orchestration—think deterministic flows where each step is clearly defined: rewrite → retrieve → generate → validate. This approach is predictable, debuggable, and works well when paired with strong observability. You can trace exactly what happened at each stage, understand failure points, and iteratively improve the system.
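The structured flow described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function `run_structured_rag`, the four callables it takes, and the toy stand-ins are all hypothetical names introduced here, and the retry limit is an assumed design choice.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class RagResult:
    answer: str
    validated: bool
    attempts: int
    # (step name, short detail) pairs, recorded in execution order
    trace: List[Tuple[str, str]] = field(default_factory=list)


def run_structured_rag(
    question: str,
    rewrite: Callable[[str, int], str],
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, List[str]], str],
    validate: Callable[[str, List[str]], bool],
    max_attempts: int = 2,
) -> RagResult:
    """Run rewrite -> retrieve -> generate -> validate with a bounded retry."""
    trace: List[Tuple[str, str]] = []
    answer = ""
    for attempt in range(1, max_attempts + 1):
        query = rewrite(question, attempt)
        trace.append(("rewrite", query))
        docs = retrieve(query)
        trace.append(("retrieve", f"{len(docs)} docs"))
        answer = generate(question, docs)
        trace.append(("generate", answer))
        ok = validate(answer, docs)
        trace.append(("validate", str(ok)))
        if ok:
            return RagResult(answer, True, attempt, trace)
    # Every attempt failed validation: return the last answer, flagged as such.
    return RagResult(answer, False, max_attempts, trace)


# Toy stand-ins so the sketch runs without a model or a vector store.
CORPUS = {"refund policy": "Refunds are issued within 14 days."}


def toy_rewrite(question: str, attempt: int) -> str:
    # Attempt 1 passes the question through; later attempts normalize it.
    return question if attempt == 1 else "refund policy"


def toy_retrieve(query: str) -> List[str]:
    return [text for key, text in CORPUS.items() if key in query.lower()]


def toy_generate(question: str, docs: List[str]) -> str:
    return docs[0] if docs else "I don't know."


def toy_validate(answer: str, docs: List[str]) -> bool:
    return bool(docs) and answer in docs


result = run_structured_rag(
    "money back?", toy_rewrite, toy_retrieve, toy_generate, toy_validate
)
```

Because every step appends to `trace`, a failed first attempt stays visible even when the second attempt succeeds, which is the debuggability this approach is meant to provide.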

The second path is more autonomous. This is where frameworks like an Agent Development Kit (ADK) come into play. Instead of explicitly defining the flow, you give the system tools and let it decide what to do next. It can choose when to retrieve, when to call an external API, or when to refine its reasoning. This flexibility is powerful, especially for open-ended tasks or multi-tool environments.
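For contrast, the autonomous style can be sketched as a loop in which a policy picks the next tool. This is a framework-agnostic illustration and does not use ADK's actual API; `run_agent`, `toy_policy`, and the `search_docs` tool are hypothetical, and in a real agent the policy would be a model call rather than a hand-written function.

```python
from typing import Callable, Dict, List, Optional, Tuple

Tool = Callable[[str], str]
# A policy maps (question, observations so far) to (action, argument).
# The action is either a tool name or "finish" with the final answer.
Policy = Callable[[str, List[str]], Tuple[str, str]]


def run_agent(
    question: str,
    tools: Dict[str, Tool],
    policy: Policy,
    max_steps: int = 5,
) -> Tuple[Optional[str], List[Tuple[str, str]]]:
    """Let the policy choose tools until it finishes or the budget runs out."""
    observations: List[str] = []
    log: List[Tuple[str, str]] = []
    for _ in range(max_steps):
        action, arg = policy(question, observations)
        log.append((action, arg))
        if action == "finish":
            return arg, log
        if action not in tools:
            # Feed the error back so the policy can recover on the next step.
            observations.append(f"error: unknown tool {action!r}")
            continue
        observations.append(tools[action](arg))
    return None, log  # step budget exhausted without an answer


def toy_policy(question: str, observations: List[str]) -> Tuple[str, str]:
    # Stand-in for a model: search once, then answer from the observation.
    if not observations:
        return "search_docs", question
    return "finish", f"Based on: {observations[-1]}"


tools = {"search_docs": lambda q: "Refunds are issued within 14 days."}
answer, log = run_agent("What is the refund window?", tools, toy_policy)
```

Note that the control flow is no longer visible in the code: the sequence of steps exists only in `log`, after the fact. The `max_steps` budget is one simple way to keep such a loop bounded.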

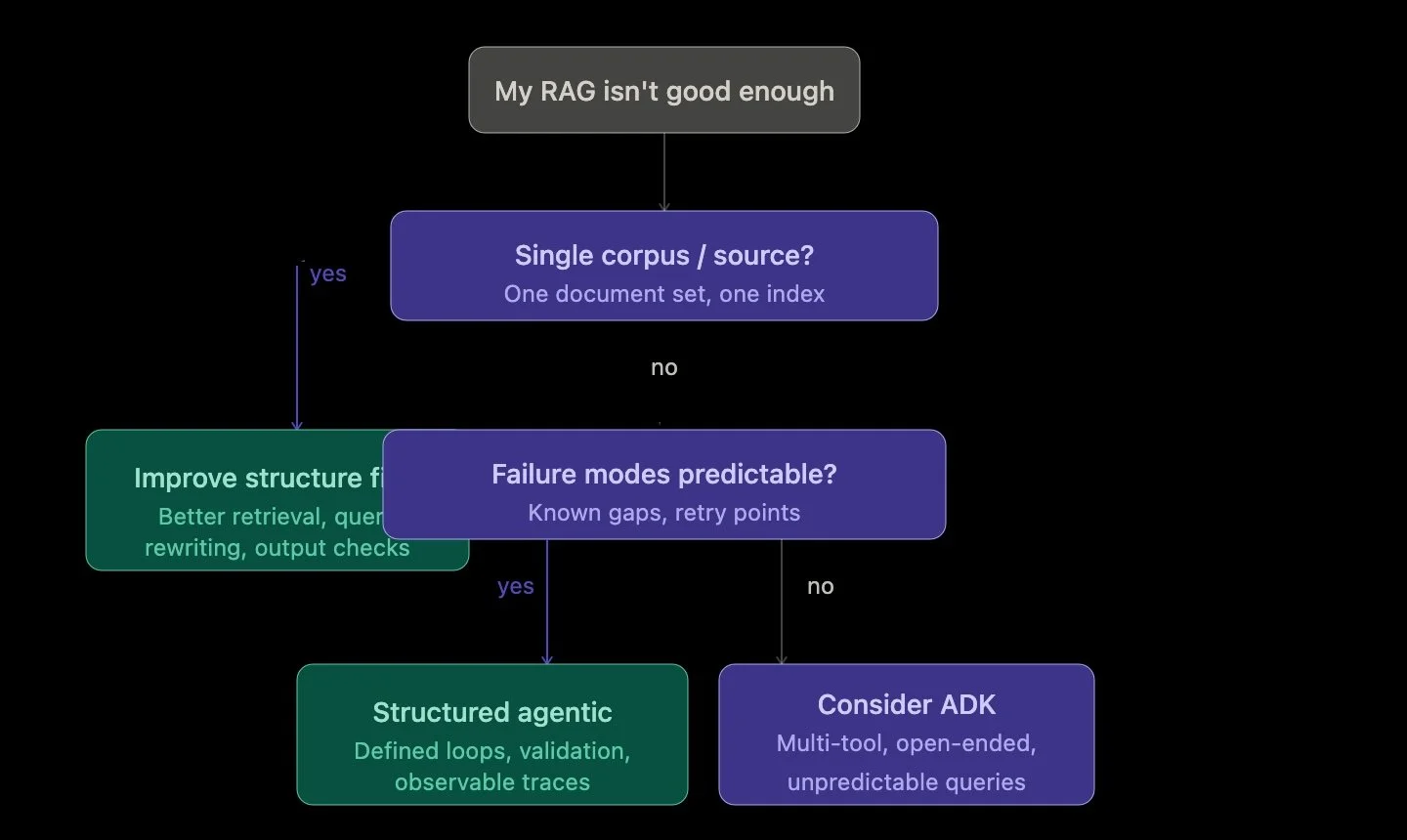

But that flexibility comes at a cost. Autonomous systems are harder to debug, harder to control, and often harder to explain. If your use case is a single-source RAG system—like querying a document corpus—introducing a full agent framework can add unnecessary complexity. In many cases, the biggest gains come not from autonomy, but from better structure: improving retrieval, adding query rewriting, and validating outputs.

In my own RAG system, I found that the most impactful improvements came before introducing any agent framework. Separating retrieval and generation into distinct steps, adding trace-level observability, and experimenting with query refinement provided immediate clarity into how the system behaved. Only after that foundation was in place did it make sense to consider more agentic patterns.

This leads to a broader insight: the transition to Agentic RAG isn’t really about adding agents—it’s about deciding when your system needs to think. Not every RAG system does. But when it does, the goal shouldn’t be maximum autonomy. It should be controlled, observable reasoning that improves outcomes without sacrificing reliability.

And that’s where ADK fits best—not as a starting point, but as an extension. When your system grows beyond a single workflow, when it needs to coordinate multiple tools or operate in less predictable environments, that’s when autonomy becomes valuable. Until then, structured agentic patterns can take you surprisingly far.

As RAG systems evolve, observability becomes just as important as capability. Once you introduce loops, retries, and decisions, you need to understand not just what the system answered, but how it got there. That’s the real challenge—and the real opportunity—in building agentic AI systems.

How to Detect Drift Early in RAG Systems

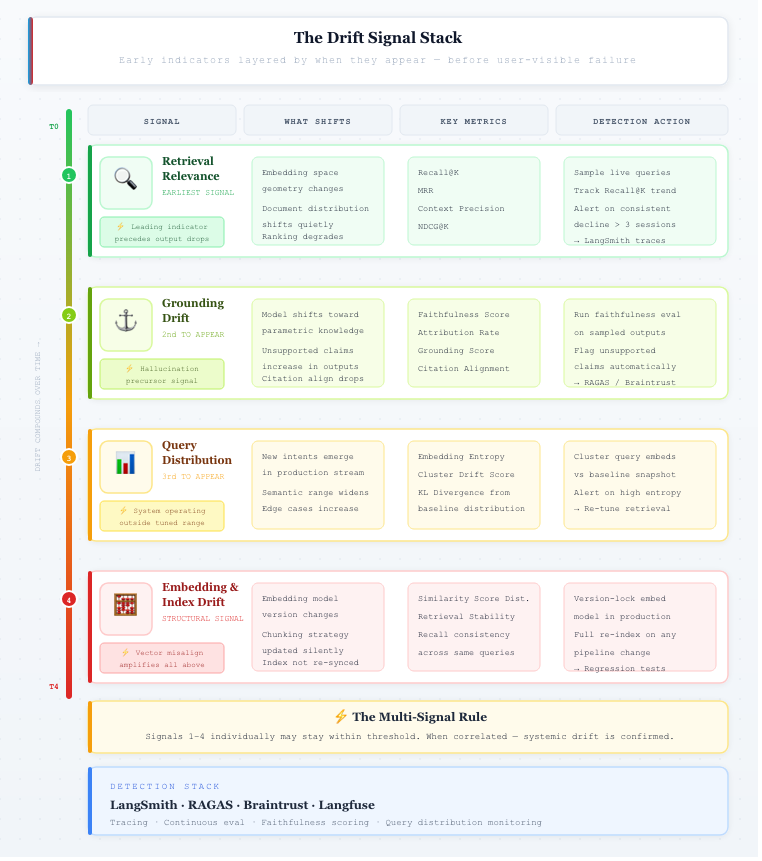

RAG systems rarely fail abruptly; they drift progressively. The challenge is not recognizing that drift exists, but identifying early-stage deviations in system behavior before they surface in user-visible outputs. In production, these deviations rarely manifest as explicit failures. Instead, they appear as gradual shifts in retrieval relevance, context utilization, and response grounding, while standard system metrics remain stable.

Traditional monitoring signals — latency, throughput, error rates — are insufficient for detecting this class of degradation. RAG pipelines can maintain stable infrastructure performance while experiencing semantic performance decay. The system continues to produce fluent responses, but the alignment between query, retrieved context, and generated output weakens over time. Detecting drift therefore requires instrumenting the system at the retrieval and generation layers, not just the infrastructure layer.

One of the earliest indicators is retrieval relevance degradation. This can be measured using ranking and recall-oriented metrics such as Recall@K, Mean Reciprocal Rank (MRR), and context precision. A consistent decline in these metrics — even within a narrow range — often signals upstream changes in embedding space, document distribution, or query composition. Importantly, this degradation typically precedes observable drops in answer correctness, making it a leading indicator rather than a lagging one.
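As a concrete reference, the retrieval metrics named above can be computed as follows. This sketch assumes each query has a set of known relevant document ids (a labeled evaluation set). The `precision_at_k` function is a simple unweighted stand-in for context precision; some evaluation libraries define that metric with rank weighting.

```python
from typing import Iterable, Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top k results that are relevant."""
    top = retrieved[:k]
    if not top:
        return 0.0
    return sum(1 for doc_id in top if doc_id in relevant) / len(top)


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: Iterable[Tuple[Sequence[str], Set[str]]]) -> float:
    """Average reciprocal rank over (retrieved, relevant) pairs."""
    scores = [reciprocal_rank(retrieved, relevant) for retrieved, relevant in runs]
    return sum(scores) / len(scores) if scores else 0.0


# Two illustrative queries with made-up document ids.
runs = [
    (["d3", "d1", "d7"], {"d1", "d2"}),  # first relevant hit at rank 2
    (["d5", "d6"], {"d5"}),              # first relevant hit at rank 1
]
recall = recall_at_k(runs[0][0], runs[0][1], k=3)
precision = precision_at_k(runs[0][0], runs[0][1], k=3)
mrr = mean_reciprocal_rank(runs)
```

Tracked per day or per release on a fixed query set, these values give the trend lines in which a slow decline becomes visible.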

A second critical signal is grounding drift, defined as the decreasing dependence of generated outputs on retrieved context. This can be quantified through faithfulness or attribution metrics, which evaluate whether statements in the response are supported by source documents. An increase in unsupported claims or a drop in citation alignment suggests that the model is relying more on parametric knowledge than retrieved evidence — a common precursor to hallucination.
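A crude way to approximate a grounding score is lexical overlap between each response sentence and the retrieved context. The sketch below is a deliberately simple proxy, not a faithfulness metric from any particular library: production setups typically use an entailment model or an LLM judge instead. The function name and the 0.6 threshold are assumptions chosen for illustration.

```python
import re
from typing import List, Set


def _tokens(text: str) -> Set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def supported_fraction(
    response: str, contexts: List[str], threshold: float = 0.6
) -> float:
    """Fraction of response sentences whose tokens mostly occur in the contexts."""
    context_tokens: Set[str] = set()
    for context in contexts:
        context_tokens |= _tokens(context)

    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    if not sentences:
        return 0.0

    supported = 0
    for sentence in sentences:
        tokens = _tokens(sentence)
        if tokens and len(tokens & context_tokens) / len(tokens) >= threshold:
            supported += 1
    return supported / len(sentences)


contexts = ["Refunds are issued within 14 days of purchase."]
response = "Refunds are issued within 14 days. Shipping is always free worldwide."
score = supported_fraction(response, contexts)
```

Here the first sentence is fully covered by the context and the second is not, so half of the response counts as supported. Token overlap cannot detect paraphrase or negation, so treat a score like this as a trend signal rather than a correctness check.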

Another important dimension is query distribution shift. Production query streams are non-stationary: over time, they introduce new intents, broader semantic ranges, and edge-case formulations. This can be analyzed using embedding clustering, entropy measures, or distance from baseline query distributions. A measurable shift indicates that the system is operating outside the conditions under which it was originally tuned, increasing the likelihood of retrieval and generation mismatch.
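One simple form of "distance from baseline query distributions" is the cosine distance between the centroid of baseline query embeddings and the centroid of a recent window. The sketch below uses plain Python lists and tiny two-dimensional vectors for illustration; the function names are hypothetical, and real embeddings would come from whatever embedding model the system uses.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def centroid(vectors: Sequence[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    if not vectors:
        raise ValueError("need at least one vector")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; returns 1.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def centroid_shift(baseline: Sequence[Vector], current: Sequence[Vector]) -> float:
    """Distance between the mean baseline query and the mean current query."""
    return cosine_distance(centroid(baseline), centroid(current))


baseline_queries = [[1.0, 0.0], [0.9, 0.1]]
similar_queries = [[0.9, 0.1], [1.0, 0.0]]
shifted_queries = [[0.0, 1.0], [0.1, 0.9]]

no_shift = centroid_shift(baseline_queries, similar_queries)
large_shift = centroid_shift(baseline_queries, shifted_queries)
```

A centroid only captures movement of the average query. It will miss a distribution that keeps the same mean but spreads out, which is why clustering or entropy measures are useful complements.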

Drift can also originate from embedding and index inconsistency. Changes in embedding models, chunking strategies, or ingestion pipelines alter the geometry of the vector space. Without coordinated re-indexing, this leads to vector misalignment, where similarity scores no longer reflect true semantic proximity. This manifests as unstable retrieval rankings and reduced recall, even when underlying data quality has not changed.
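One way to guard against this kind of misalignment is to store a fingerprint of the embedding configuration alongside the index and compare it at query time. The following is a sketch under assumptions: `EmbeddingConfig`, its fields, and `assert_compatible` are hypothetical names, and where the fingerprint is stored (index metadata, a config table) depends on the vector store in use.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EmbeddingConfig:
    model_name: str
    model_version: str
    chunk_size: int
    chunk_overlap: int

    def fingerprint(self) -> str:
        """Stable short hash of every setting that shapes the vector space."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:16]


class IndexConfigMismatch(RuntimeError):
    """Raised when the query pipeline and the index disagree on configuration."""


def assert_compatible(index_fingerprint: str, query_config: EmbeddingConfig) -> None:
    current = query_config.fingerprint()
    if index_fingerprint != current:
        raise IndexConfigMismatch(
            f"index built with {index_fingerprint}, "
            f"query pipeline uses {current}; re-index before serving"
        )


# Illustrative values only; the model name is a placeholder.
indexed_with = EmbeddingConfig("example-embedder", "v1", 512, 64)
stored_fingerprint = indexed_with.fingerprint()

assert_compatible(stored_fingerprint, indexed_with)  # same config: no error

upgraded = EmbeddingConfig("example-embedder", "v2", 512, 64)
try:
    assert_compatible(stored_fingerprint, upgraded)
    mismatch_detected = False
except IndexConfigMismatch:
    mismatch_detected = True
```

Failing loudly at startup or at query time turns a silent ranking problem into an explicit deployment error.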

A key characteristic of these signals is that they are low-amplitude and high-frequency. Individually, they may fall within acceptable thresholds. However, when correlated — for example, simultaneous drops in Recall@K, grounding scores, and query distribution alignment — they indicate systemic drift. Effective detection therefore requires multi-signal monitoring, rather than reliance on a single metric.
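The idea of correlating weak signals can be sketched as a rule that fires either when one metric drops sharply or when several drop slightly at the same time. The metric names, the thresholds, and the `detect_systemic_drift` function are assumptions for illustration, and the sketch assumes higher values are better for every signal.

```python
from typing import Dict, List


def relative_drop(baseline: float, current: float) -> float:
    """Drop relative to baseline; positive means the metric got worse."""
    if baseline == 0:
        return 0.0
    return (baseline - current) / baseline


def detect_systemic_drift(
    baseline: Dict[str, float],
    current: Dict[str, float],
    single_alert: float = 0.10,     # one signal alone is alarming at this drop
    correlated_warn: float = 0.03,  # small drop that matters only in combination
    min_signals: int = 2,
) -> Dict[str, object]:
    drops = {
        name: relative_drop(baseline[name], current[name])
        for name in baseline
        if name in current
    }
    strong: List[str] = [n for n, d in drops.items() if d >= single_alert]
    weak: List[str] = [n for n, d in drops.items() if d >= correlated_warn]

    if strong:
        status, signals = "alert", strong
    elif len(weak) >= min_signals:
        status, signals = "systemic_drift", weak
    else:
        status, signals = "ok", []
    return {"status": status, "signals": sorted(signals), "drops": drops}


baseline = {"recall_at_5": 0.80, "grounding": 0.90, "query_alignment": 0.95}

# Each metric slips by roughly 4%: none would trigger an alert on its own.
slipping = {"recall_at_5": 0.77, "grounding": 0.86, "query_alignment": 0.91}
report = detect_systemic_drift(baseline, slipping)

# Only one metric slips: treated as noise under these thresholds.
isolated = {"recall_at_5": 0.77, "grounding": 0.90, "query_alignment": 0.95}
quiet_report = detect_systemic_drift(baseline, isolated)
```

The thresholds here are placeholders. In practice they would be calibrated against the normal week-to-week variance of each metric.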

Operationally, early drift detection depends on integrating continuous evaluation with production observability. Systems built with frameworks like LangChain and instrumented via tools such as LangSmith enable tracing of retrieval, prompt construction, and generation steps. By continuously sampling live queries, logging intermediate artifacts, and tracking metric trends over time, teams can detect deviations before they propagate to user-visible failures.
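The sampling and trend-tracking part of this loop can be sketched without any particular framework. The code below does not use LangChain or LangSmith APIs; `should_sample` and `TrendTracker` are hypothetical helpers, and the scores fed into the tracker would come from whichever evaluator scores the sampled traces.

```python
import hashlib
from collections import deque
from statistics import fmean
from typing import Deque, List, Optional


def should_sample(query_id: str, rate: float) -> bool:
    """Deterministically select roughly `rate` of queries by hashing the id."""
    digest = hashlib.sha256(query_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 10_000
    return bucket < rate * 10_000


class TrendTracker:
    """Freeze the first scores as a baseline, then track a rolling recent window."""

    def __init__(self, baseline_size: int, recent_size: int) -> None:
        self.baseline_size = baseline_size
        self.baseline: List[float] = []
        self.recent: Deque[float] = deque(maxlen=recent_size)

    def add(self, score: float) -> None:
        if len(self.baseline) < self.baseline_size:
            self.baseline.append(score)
        else:
            self.recent.append(score)

    def delta(self) -> Optional[float]:
        """Recent mean minus baseline mean; None until both have data."""
        if len(self.baseline) < self.baseline_size or not self.recent:
            return None
        return fmean(self.recent) - fmean(self.baseline)


tracker = TrendTracker(baseline_size=3, recent_size=2)
for score in [0.8, 0.8, 0.8, 0.7, 0.6]:
    tracker.add(score)
change = tracker.delta()  # negative: recent scores sit below the baseline
```

Hash-based sampling keeps the decision stable for a given query id, so a sampled query can be re-examined later without storing a separate sampling log.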

The importance of tracing becomes clear when observing real system behavior. Using tools like LangSmith, it is possible to inspect how queries are processed end-to-end — from retrieved documents to final responses. Even in cases where the answer appears correct, traces often reveal subtle issues such as partially relevant retrieval or weak grounding. These are early indicators of drift that would not be visible from outputs alone.

Drift Signal Stack:

Signal 1 — Retrieval Relevance (green) — earliest leading indicator, precedes output drops

Signal 2 — Grounding Drift (lime) — hallucination precursor, model shifts to parametric knowledge

Signal 3 — Query Distribution (amber) — system operating outside its tuned range

Signal 4 — Embedding & Index (red) — structural signal that amplifies all the above

Drift is not an anomaly — it is an inherent property of systems operating on evolving data and probabilistic models. The objective is not to eliminate drift, but to detect it at low amplitude, before it compounds. Systems that incorporate early detection mechanisms can respond with targeted recalibration, while those that rely on late-stage signals are forced into reactive fixes.

References

Vector Drift in Azure AI Search: Three Hidden Reasons Your RAG Accuracy Degrades After Deployment - https://techcommunity.microsoft.com/blog/azure-ai-foundry-blog/vector-drift-in-azure-ai-search-three-hidden-reasons-your-rag-accuracy-degrades-/4493031

Production RAG in 2025: Evaluation Suites, CI/CD Quality Gates, and Observability You Can’t Ship Without - https://dextralabs.com/blog/production-rag-in-2025-evaluation-cicd-observability

What Happens to RAG Systems in Production Over Time

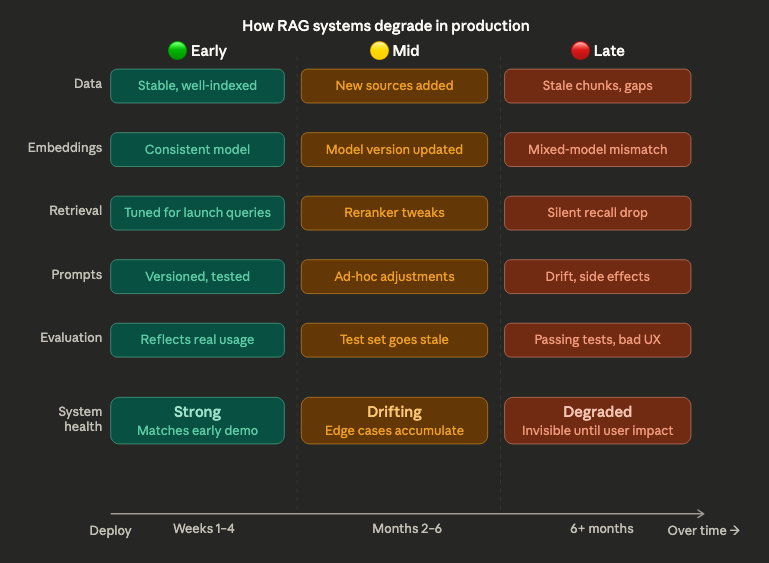

RAG systems often start strong. Early demos look promising, responses feel relevant, and initial evaluations show solid performance. But over time, something changes. Answers become less consistent, retrieval quality drifts, and edge cases appear more frequently. Nothing has visibly “broken”, but the system no longer behaves the way it did at the start. This gradual decline is one of the most common and least discussed challenges in production AI systems.

The reason is that RAG systems are not static. They depend on components that evolve continuously: data sources are updated, embeddings change, ranking behavior shifts, and user queries become more diverse. Each of these changes introduces variability. On its own, each layer may seem manageable. But together, they create a system that behaves differently over time, even if no single change appears significant. What teams experience as degradation is often the result of accumulated, untracked change.

How RAG systems degrade in production

Another factor is that evaluation does not keep pace with the system. Many teams rely on fixed datasets or periodic testing, which quickly becomes outdated as the system evolves. A RAG pipeline can pass evaluation while silently drifting in production. Retrieval quality may decline for certain query types, or newer data may introduce inconsistencies that aren’t captured in test cases. Without continuous evaluation tied to real usage, degradation remains invisible until it impacts users.
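A decline limited to certain query types is easy to miss in an aggregate score, so one option is to break evaluation results down by segment. In this sketch the segment labels, the scores, and the 0.05 tolerance are made up for illustration, and how queries get assigned to segments (rules, a classifier, clustering) is left open.

```python
from collections import defaultdict
from statistics import fmean
from typing import Dict, Iterable, List, Tuple


def scores_by_segment(records: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Mean score per segment from (segment, score) records."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for segment, score in records:
        grouped[segment].append(score)
    return {segment: fmean(values) for segment, values in grouped.items()}


def regressed_segments(
    baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.05
) -> List[str]:
    """Segments whose score fell by more than `tolerance` versus baseline."""
    return sorted(
        segment
        for segment, score in current.items()
        if segment in baseline and baseline[segment] - score > tolerance
    )


baseline = scores_by_segment(
    [("billing", 0.90), ("billing", 0.90), ("api", 0.80), ("api", 0.80)]
)
current = scores_by_segment(
    [("billing", 0.90), ("billing", 0.88), ("api", 0.60), ("api", 0.70)]
)
regressed = regressed_segments(baseline, current)
```

Segments that appear only in current traffic have no baseline to compare against and are skipped here. Their appearance is itself worth tracking as a sign that the query mix has changed.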

There is also a structural challenge: teams optimize RAG systems locally, but the systems degrade globally. Improvements in one component (for example, updating embeddings or tuning prompts) can unintentionally affect other parts of the system. Better retrieval recall might introduce more noise. Prompt adjustments might change how sources are used. These interactions are difficult to predict, especially without visibility into how the system behaves end-to-end. Over time, small optimizations can accumulate into system-level instability.

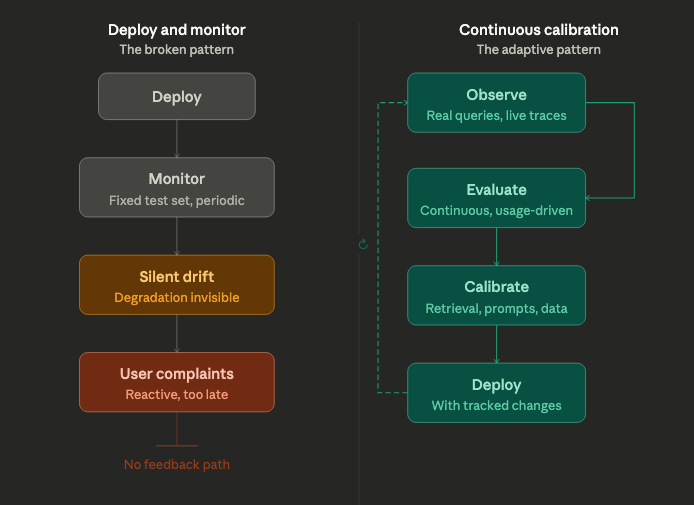

Addressing this requires a shift in how RAG systems are operated. Instead of treating them as deploy-and-monitor systems, teams need to think in terms of continuous calibration. This means integrating evaluation with observability, tracking how retrieval, prompts, and outputs evolve over time, and establishing feedback loops that reflect real-world usage. Degradation is not an anomaly — it is an expected property of systems built on changing data and probabilistic components.

Continuous Calibration

RAG systems don’t degrade because they are poorly built. They degrade because they are dynamic. The challenge is not to prevent change, but to make it visible, measurable, and manageable. Teams that recognize this early can design systems that adapt over time. Those that don’t often find themselves chasing issues that are difficult to trace and even harder to fix.

From Outputs to Systems: Rethinking RAG Evaluation with Observability

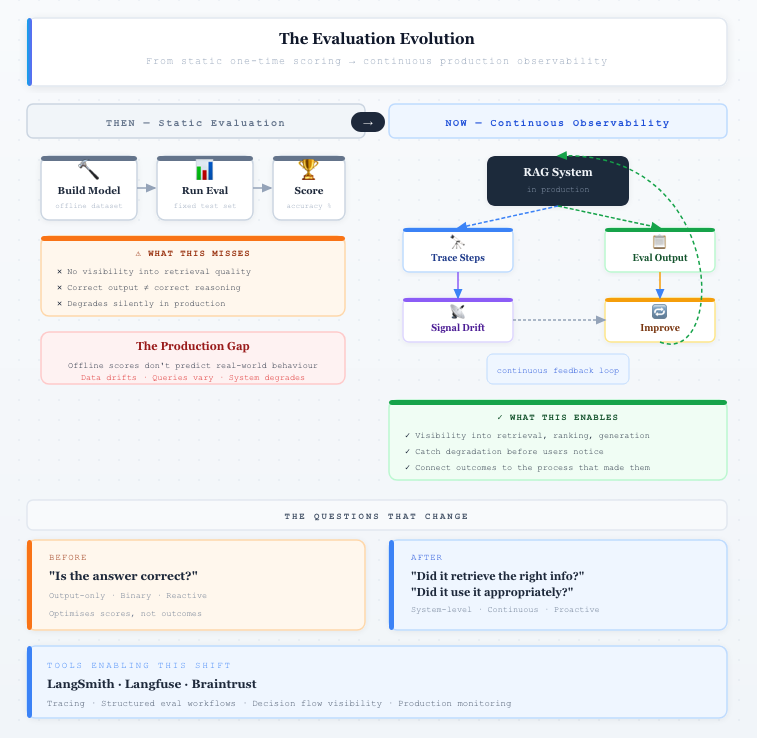

Retrieval-Augmented Generation (RAG) systems are often evaluated using familiar patterns from traditional machine learning: measure accuracy, compare outputs to expected answers, and optimize for better scores. This approach works well for static models, where behavior is relatively predictable. But RAG systems are fundamentally different. They are composed systems involving retrieval, ranking, prompt construction, and generation — each contributing to the final outcome. Evaluating only the output assumes the system behaves as a single unit, when in reality it operates as a chain of interdependent components.

This creates a critical blind spot. A system can produce a correct answer for the wrong reasons — retrieving irrelevant documents but generating a plausible response. Conversely, it can retrieve the right information but fail to use it effectively. Output-based evaluation cannot distinguish between these scenarios. Without visibility into intermediate steps, teams are left guessing where issues originate. Evaluation, in this form, becomes reactive and imprecise, limiting the ability to systematically improve system behavior.
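The distinction drawn above can be made explicit by scoring retrieval and answer separately and classifying each sample into one of four cases. This sketch assumes both judgments are already available per sample, from labels or from an evaluator; the `Diagnosis` names are illustrative.

```python
from collections import Counter
from enum import Enum
from typing import Iterable, Tuple


class Diagnosis(Enum):
    HEALTHY = "relevant retrieval, correct answer"
    RIGHT_FOR_WRONG_REASON = "irrelevant retrieval, correct answer"
    GENERATION_FAILURE = "relevant retrieval, incorrect answer"
    RETRIEVAL_FAILURE = "irrelevant retrieval, incorrect answer"


def diagnose(retrieval_relevant: bool, answer_correct: bool) -> Diagnosis:
    if retrieval_relevant and answer_correct:
        return Diagnosis.HEALTHY
    if answer_correct:
        return Diagnosis.RIGHT_FOR_WRONG_REASON
    if retrieval_relevant:
        return Diagnosis.GENERATION_FAILURE
    return Diagnosis.RETRIEVAL_FAILURE


def summarize(samples: Iterable[Tuple[bool, bool]]) -> Counter:
    """Count diagnoses over (retrieval_relevant, answer_correct) pairs."""
    return Counter(diagnose(relevant, correct) for relevant, correct in samples)


samples = [(True, True), (False, True), (True, False), (False, False), (True, True)]
summary = summarize(samples)

# Output-only accuracy treats the "right for the wrong reason" case as a success.
output_accuracy = sum(correct for _, correct in samples) / len(samples)
```

In this toy data, output accuracy counts three of five samples as correct, while only two are healthy end to end. The two failure categories also point at different fixes: one at retrieval, the other at prompting or generation.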

The challenge becomes more pronounced in production environments, where RAG systems operate under constant change. Data sources evolve, embeddings are updated, and user queries vary in unpredictable ways. What works in offline testing may degrade over time without clear signals. Unlike traditional evaluation setups based on static datasets, RAG systems require continuous validation against a moving target. This makes evaluation not just a one-time activity, but an ongoing process tied to how the system behaves in real-world conditions.

There is also a mismatch between what is measured and what actually matters. Metrics such as exact match or semantic similarity capture correctness at a surface level, but fail to reflect usefulness, relevance, or grounding in retrieved data. A response may be technically correct yet incomplete, misleading, or disconnected from its sources. Optimizing for these metrics can improve scores without improving outcomes, creating a gap between evaluation results and user experience.

The Metric Mismatch

To address this, evaluation needs to evolve from output-focused measurement to system-level understanding. Instead of asking only “Is the answer correct?”, teams should ask: “Did the system retrieve the right information?”, “Did it use that information appropriately?”, and “How did it arrive at this result?” Answering these questions requires visibility into the system’s internal steps — making retrieval, prompt construction, and decision flow observable and measurable.
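Making internal steps observable starts with recording them in a structured form. Below is a minimal, framework-agnostic trace recorder. It is a sketch of the idea rather than the data model of any tracing platform; `Trace`, `Span`, and the recorded field names and values are hypothetical.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List


@dataclass
class Span:
    name: str
    inputs: Dict[str, Any]
    outputs: Dict[str, Any] = field(default_factory=dict)
    duration_ms: float = 0.0


@dataclass
class Trace:
    query: str
    spans: List[Span] = field(default_factory=list)

    @contextmanager
    def span(self, name: str, **inputs: Any) -> Iterator[Span]:
        """Record one pipeline step, including its duration, even on error."""
        current = Span(name, dict(inputs))
        start = time.perf_counter()
        try:
            yield current
        finally:
            current.duration_ms = (time.perf_counter() - start) * 1000
            self.spans.append(current)


trace = Trace("What is the refund window?")

with trace.span("retrieve", query=trace.query) as step:
    step.outputs["doc_ids"] = ["policy-7"]  # placeholder retrieval result

with trace.span("build_prompt", doc_ids=["policy-7"]) as step:
    step.outputs["prompt_chars"] = 412  # placeholder prompt size

with trace.span("generate") as step:
    step.outputs["answer"] = "Refunds are issued within 14 days."

step_names = [s.name for s in trace.spans]
```

With a record like this per request, each of the three questions above maps to a span: retrieval quality to `retrieve`, context usage to `build_prompt`, and the final result to `generate`.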

This is where observability becomes essential. By integrating evaluation with observability, teams can move beyond black-box assessment and begin to understand how their systems behave. Platforms like LangSmith and Braintrust are emerging to support this shift, enabling tracing, structured evaluation workflows, and deeper insight into system execution. These capabilities make it possible to connect outcomes with the processes that produced them.

The Evaluation Evolution

However, tooling alone is not enough. The real shift is conceptual: treating RAG not as a model to be scored, but as a system to be continuously observed, evaluated, and improved. Evaluation is no longer a standalone step — it is part of how AI systems are understood and operated in production. Moving from outputs to systems is not just a technical adjustment; it is a necessary evolution for building reliable, trustworthy AI.

AI Program Management: Why Technical Excellence Isn’t Enough

Most AI systems don’t fail because of poor models. They fail because the surrounding system — people, processes, and decisions — isn’t designed to handle uncertainty. Teams often focus heavily on model quality, infrastructure, and performance benchmarks, assuming that technical excellence will naturally translate into successful outcomes. In reality, production AI systems introduce ambiguity at every layer: shifting requirements, evolving data, and unpredictable behavior. Without strong program management, even technically sound systems struggle to deliver consistent value.

One of the biggest challenges in AI delivery is that scope is inherently unstable. Unlike traditional software, where requirements can be defined and incrementally delivered, AI systems evolve as teams learn from data and user behavior. Stakeholders frequently change expectations midstream — what started as a simple retrieval system becomes an agent with tool access, memory, and decision-making capabilities. This isn’t scope creep in the traditional sense; it’s a reflection of uncertainty. The role of program management is not to eliminate this change, but to contain and guide it without derailing timelines or overloading teams.

Another critical gap is misalignment across functions. Engineering teams optimize for model performance and system reliability, product teams focus on user experience, and leadership often expects immediate business impact. In AI systems, these priorities don’t always align. A model improvement might increase latency or cost. A product feature might introduce new security risks. Without a clear framework for trade-offs, teams end up optimizing locally while the overall system suffers. Effective AI program management creates alignment by defining shared success metrics — not just accuracy, but reliability, cost efficiency, and controllability.

What makes this even more complex is that AI systems are not fully deterministic. Individual components may look deterministic in isolation, but the system as a whole behaves non-deterministically. You can’t always predict how it will behave in production, especially as it interacts with real users and external data. This makes traditional delivery models insufficient. Instead of fixed roadmaps, teams need adaptive planning, continuous evaluation, and strong observability into system behavior. Program managers play a key role in establishing feedback loops, ensuring that insights from production inform both technical improvements and product decisions.

The teams that succeed with AI aren’t just building better models — they’re building better systems around them. That means treating AI delivery as a cross-functional, continuously evolving program rather than a one-time project. Technical excellence is necessary, but it’s not sufficient. The real advantage comes from the ability to manage uncertainty, align teams, and maintain control as systems become more autonomous. In that sense, AI program management isn’t just coordination — it’s a core capability for turning experimentation into production reality.

The Uncertainty Stack